What Is Explainable AI (XAI) and Why It Matters

Explainable artificial intelligence (XAI) refers to methods for describing an AI model, its expected impact, and its potential biases, with the aim of ensuring transparency and trust.

Artificial Intelligence (AI) is transforming how organizations operate and how individuals use technology in their daily lives. Today, AI is used in a wide range of applications, from helping physicians identify and diagnose illnesses to recommending movies on streaming services. As a result, AI systems are increasingly involved in decisions that affect our day-to-day lives.

However, as AI systems grow more capable, a new issue emerges. Many AI models operate like "black boxes": they produce outcomes, but it is often difficult to understand how they arrived at them. People find it hard to trust a system when they cannot tell why a decision was made.

This is where explainable AI (XAI) comes in. Its goal is to help humans understand AI systems by showing the process and rationale behind a system's decision. This article defines explainable AI, explains why it matters, and discusses how it can contribute to more trustworthy and responsible AI systems.

The Growing Influence of Artificial Intelligence

Over the last decade, artificial intelligence has moved from research labs into everyday applications. Many of the services we depend on today are powered by machine learning models, including:

- Personalized recommendations in e-commerce and streaming platforms
- Fraud detection systems used by banks
- Medical diagnosis tools that assist healthcare professionals
- Autonomous driving technologies
- Customer service chatbots and virtual assistants

These technologies are able to analyze massive volumes of data and spot patterns that people might overlook. Because of this, businesses are relying more and more on AI to automate decision-making.

Even though these systems can be very accurate, their internal mechanisms are often highly complex. Deep learning models, in particular, can have millions or even billions of parameters. This complexity makes it hard to understand how a model reached a specific conclusion.

The “Black Box” Problem in AI

Many contemporary AI systems are commonly called "black-box models." This means that although the system's inputs and outputs are visible to us, its internal logic is not.

Suppose such a model rejects an application, for example for a loan. If the model is not explainable, the applicant, and occasionally even the developers, might not understand its reasoning. This lack of transparency raises several issues:

- Customers may feel the decision is unfair.
- Organizations may struggle to justify decisions to regulators.
- Hidden biases in the data may lead to discriminatory outcomes.

In high-stakes fields like healthcare, banking, and law, it can be dangerous to rely on algorithms that cannot explain their conclusions. People must understand both the outcome and the logic behind it.

What Is Explainable AI (XAI)?

The term "explainable AI," often shortened to "XAI," describes methods and techniques that make AI systems more transparent and easier for humans to understand.

In simple terms, Explainable AI helps answer questions like:

- Why did the model make this prediction?
- Which factors influenced the decision the most?
- How confident is the model in its output?
- Would the decision change if certain inputs were different?

Explainable systems not only provide outcomes but also show the decision-making process. This makes it easier for humans to understand what an AI system is doing in practice, for example when it uses variables like blood pressure, cholesterol, and age to predict heart disease.
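The last two questions (how confident is the model, and would the decision change with different inputs) can be illustrated with a small "what-if" probe. The sketch below is only an illustration: the logistic model, its weights, the 0.5 decision threshold, and the patient values are invented and have no clinical basis, and `predict_risk` and `what_if` are hypothetical helper names.

```python
import math

# Hypothetical weights for an illustrative heart-disease risk model (not clinically derived).
WEIGHTS = {"age": 0.04, "systolic_bp": 0.02, "cholesterol": 0.01}
BIAS = -7.4
THRESHOLD = 0.5  # assumed cut-off for flagging a patient as high risk

def predict_risk(patient):
    """Return the modelled probability of heart disease (logistic model)."""
    score = BIAS + sum(WEIGHTS[name] * patient[name] for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-score))

def what_if(patient, feature, new_value):
    """Return the risk after changing one input and leaving the rest untouched."""
    changed = dict(patient, **{feature: new_value})
    return predict_risk(changed)

patient = {"age": 60, "systolic_bp": 150, "cholesterol": 240}
risk_now = predict_risk(patient)                       # about 0.60
risk_lower_bp = what_if(patient, "systolic_bp", 120)   # about 0.45

print(f"current risk: {risk_now:.2f}, flagged: {risk_now >= THRESHOLD}")
print(f"risk with systolic_bp=120: {risk_lower_bp:.2f}, flagged: {risk_lower_bp >= THRESHOLD}")
```

In this toy setup, lowering one input moves the probability across the threshold, which is the kind of answer a counterfactual explanation is meant to give.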

Why Explainable AI Matters

As AI systems are used in more critical decision-making processes, explainable AI is becoming increasingly important. These are some of the main reasons why XAI matters.

1. Building Trust in AI Systems

Adoption of AI technologies depends on trust. Users are more inclined to believe a system's recommendations when they comprehend how it functions and the reasons behind its conclusions.

For instance, a clinician is more likely to trust an AI-based diagnosis tool if it explains the medical factors underlying its prediction.

Explainability aids in bridging the knowledge gap between humans and sophisticated algorithms.

2. Detecting Bias and Ensuring Fairness

AI algorithms learn from historical data. If that data contains biases, the model may inadvertently reproduce or even amplify them.

By revealing which characteristics affect a model's decisions, explainable AI makes it easier to spot discriminatory or unfair patterns.

For example, if a hiring algorithm consistently favors particular demographic groups, explainability tools can help identify which inputs drive that bias.

3. Improving Model Performance

Explainability benefits developers as well as end users, because it helps them build better AI models.

By understanding how models make decisions, developers can:

- Identify incorrect assumptions in the model
- Detect data quality issues
- Refine features that improve prediction accuracy

In other words, explainability provides valuable insights into the model’s behavior, helping teams build better systems.

4. Meeting Regulatory and Compliance Requirements

Governments and regulatory agencies are enacting regulations to guarantee accountability and transparency in automated decision-making systems as the use of AI increases.

In industries like healthcare and finance, organizations may be required to explain how their AI systems make decisions. Explainable AI helps businesses understand and clearly present how their systems reach those decisions, which helps them meet these regulatory obligations.

How Does Explainable AI Work?

Explainable AI (XAI) operates by making AI models' decision-making clear and intelligible to people. XAI offers insights into how and why a model reached a specific prediction or choice rather than only providing an answer.

1. Interpretable Models

Certain models are easy to understand by design. Decision trees display distinct, sequential decision routes, and linear regression exposes a coefficient for each input.
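A decision route of this kind can be written out as plain code. The tiny tree below is hand-written for illustration only; the features `credit_score` and `debt_to_income`, their thresholds, and the function name `explain_loan_decision` are assumptions, not taken from any real lending policy.

```python
def explain_loan_decision(applicant):
    """Walk a tiny hand-written decision tree and record each rule that fired."""
    path = []
    if applicant["credit_score"] >= 650:
        path.append("credit_score >= 650")
        if applicant["debt_to_income"] <= 0.40:
            path.append("debt_to_income <= 0.40")
            decision = "approve"
        else:
            path.append("debt_to_income > 0.40")
            decision = "reject"
    else:
        path.append("credit_score < 650")
        decision = "reject"
    return decision, path

decision, path = explain_loan_decision({"credit_score": 700, "debt_to_income": 0.55})
print(decision, "because", " and ".join(path))
```

Because the model is the set of rules, the explanation is simply the list of rules that were followed for this applicant.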

2. Post-Hoc Explanations

For complex models, explanations are generated after a prediction has been made, showing the reasoning behind it without changing the underlying AI system.

3. Feature Importance Analysis

This method clarifies the factors that influenced the model's choice by identifying the input features that had the greatest impact on a prediction.

4. Visualization Techniques

Saliency maps and heatmaps are examples of visual tools that show which parts of the input data, particularly in text or images, affect predictions.
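One simple way to produce such a heatmap is occlusion: blank out one part of the input at a time and record how much the model's score drops. The sketch below assumes a hypothetical `model_score` function that stands in for a real image model and works on a 4x4 grid of brightness values; it is an illustration of the idea rather than any particular library's method.

```python
def model_score(image):
    """Hypothetical black box: responds only to brightness in the centre 2x2 patch."""
    return sum(image[r][c] for r in (1, 2) for c in (1, 2)) / 4.0

def occlusion_saliency(image, score_fn):
    """Saliency of a pixel = drop in score when that pixel is set to 0."""
    base = score_fn(image)
    saliency = []
    for r, row in enumerate(image):
        saliency_row = []
        for c, _ in enumerate(row):
            occluded = [list(line) for line in image]  # copy so the input is not modified
            occluded[r][c] = 0.0
            saliency_row.append(base - score_fn(occluded))
        saliency.append(saliency_row)
    return saliency

image = [[1.0] * 4 for _ in range(4)]
heatmap = occlusion_saliency(image, model_score)
for row in heatmap:
    print(" ".join(f"{value:.2f}" for value in row))
```

The printed grid is non-zero only in the centre, which is where this toy model actually looks.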

5. Local Explanation Models

Methods like LIME focus on individual predictions, explaining why the model produced a particular output for a single instance.

6. Model-Agnostic Tools

Model-agnostic techniques such as SHAP can explain a model's predictions in a consistent way, regardless of its internal complexity or architecture.

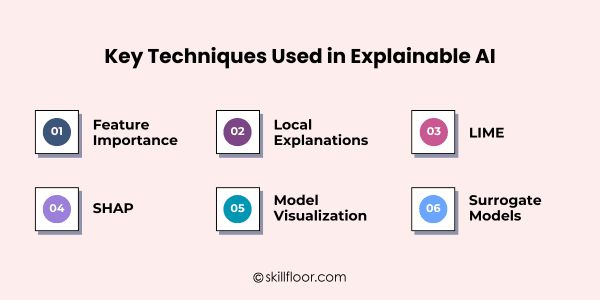

Key Techniques Used in Explainable AI

Researchers and engineers have created a variety of methods to make AI models easier to understand. The most frequently used include:

1. Feature Importance

The input variables that have the greatest influence on a model's prediction are displayed via feature importance techniques. For instance, in a credit scoring system, a loan's approval or rejection may be heavily influenced by variables like income level and credit history.
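One common way to measure this is permutation importance: shuffle one input column and see how much the model's accuracy drops. The article does not name a specific method, so treat this as one possible sketch. The `black_box` credit model, its coefficients, and the irrelevant `shoe_size` feature are invented for illustration, and the labels are generated by the model itself so that baseline accuracy is 1.0.

```python
import random

def black_box(row):
    """Hypothetical credit model: uses income and credit history, ignores shoe size."""
    income, history_years, shoe_size = row
    return 1 if 0.5 * income + 4.0 * history_years >= 50 else 0

def accuracy(rows, labels, predict):
    return sum(predict(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(rows, labels, predict, n_features, rng):
    """Importance of a feature = accuracy lost when its column is shuffled."""
    baseline = accuracy(rows, labels, predict)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(baseline - accuracy(shuffled, labels, predict))
    return importances

rng = random.Random(0)
rows = [(rng.uniform(20, 120), rng.uniform(0, 10), rng.uniform(36, 47)) for _ in range(500)]
labels = [black_box(r) for r in rows]  # in practice these would be real outcomes
importances = permutation_importance(rows, labels, black_box, 3, rng)

for name, value in zip(["income", "history_years", "shoe_size"], importances):
    print(f"{name}: {value:.3f}")
```

Shuffling `shoe_size` costs nothing because the model never reads it, while shuffling income or credit history breaks many predictions.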

2. Local Explanations

Local explanations focus on explaining specific predictions rather than the model as a whole. They help users understand why the model produced a particular outcome for a given input.

3. LIME (Local Interpretable Model-Agnostic Explanations)

LIME is a popular explainability tool for explaining the predictions of complex models. It fits a simpler, interpretable model around one particular prediction to show which features affected that outcome.
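The core idea can be sketched without the `lime` package itself: perturb the input, query the black box, weight the samples by how close they are to the original instance, and fit a weighted linear model. The code below is a simplified illustration in that spirit, not the library's implementation; `black_box`, the noise scale, and the kernel width are all assumptions.

```python
import math
import random

def black_box(x1, x2):
    """Hypothetical non-linear model we want to explain locally."""
    return x1 * x1 + 3.0 * x2

def solve(a, b):
    """Solve a small linear system by Gauss-Jordan elimination with pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [vr - factor * vc for vr, vc in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def local_linear_explanation(instance, n_samples=2000, scale=0.3, kernel_width=0.5, seed=0):
    """Fit a proximity-weighted linear model to the black box near `instance`."""
    rng = random.Random(seed)
    xtwx = [[0.0] * 3 for _ in range(3)]
    xtwy = [0.0] * 3
    for _ in range(n_samples):
        point = [v + rng.gauss(0.0, scale) for v in instance]
        dist2 = sum((p - v) ** 2 for p, v in zip(point, instance))
        weight = math.exp(-dist2 / kernel_width ** 2)  # nearby samples count more
        features = [1.0] + point                       # intercept + perturbed inputs
        target = black_box(*point)
        for i in range(3):
            xtwy[i] += weight * features[i] * target
            for j in range(3):
                xtwx[i][j] += weight * features[i] * features[j]
    intercept, w1, w2 = solve(xtwx, xtwy)
    return {"intercept": intercept, "x1": w1, "x2": w2}

explanation = local_linear_explanation([2.0, 1.0])
print({name: round(value, 2) for name, value in explanation.items()})
```

Near the point (2, 1) the fitted weights should come out close to the local slopes of the toy function, roughly 4 for `x1` and 3 for `x2`, which is the "simpler model around a particular prediction" described above.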

4. SHAP (SHapley Additive exPlanations)

SHAP is another popular method. Drawing on Shapley values from cooperative game theory, it assigns each feature a contribution value for a given prediction, showing how much each input variable pushed the result up or down.
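For a model with only a few inputs, Shapley values can be computed exactly by averaging each feature's marginal contribution over every ordering of the features. The sketch below does this by brute force for a made-up risk score; it uses a single reference input as the baseline, which is a simplification, and it is not how the `shap` library computes its values for larger models.

```python
from itertools import permutations

FEATURES = ["age", "systolic_bp", "cholesterol"]

def model(age, systolic_bp, cholesterol):
    """Hypothetical risk score with an interaction between blood pressure and cholesterol."""
    return 0.5 * age + 0.2 * systolic_bp + 0.001 * systolic_bp * cholesterol

def shapley_values(instance, baseline):
    """Exact Shapley values: average marginal contribution over all feature orderings."""
    totals = {name: 0.0 for name in FEATURES}
    orderings = list(permutations(FEATURES))
    for order in orderings:
        current = dict(baseline)            # start from the reference input
        previous = model(**current)
        for name in order:
            current[name] = instance[name]  # switch one feature to its actual value
            value = model(**current)
            totals[name] += value - previous
            previous = value
    return {name: total / len(orderings) for name, total in totals.items()}

instance = {"age": 60, "systolic_bp": 150, "cholesterol": 240}
baseline = {"age": 50, "systolic_bp": 120, "cholesterol": 200}
contributions = shapley_values(instance, baseline)

for name, value in contributions.items():
    print(f"{name}: {value:+.2f}")
print("sum of contributions:", round(sum(contributions.values()), 2))
print("prediction minus baseline:", round(model(**instance) - model(**baseline), 2))
```

The contributions add up to the difference between the prediction and the baseline prediction, which is the property that makes these values additive. Enumerating all orderings grows factorially with the number of features, so this approach only suits very small examples.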

5. Model Visualization

Charts, graphs, and heatmaps are examples of visualization tools that help explain AI models. They show how various features influence predictions and highlight relationships in the data.

6. Surrogate Models

Surrogate models approximate the behavior of a complicated AI system with a simpler, more interpretable model. This helps users understand the original model's decision-making process.
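As a minimal sketch of this idea, the code below fits a one-rule surrogate (a decision stump) to the outputs of a black box and reports its fidelity, meaning the share of inputs on which the rule and the black box agree. The `black_box` function, the feature ranges, and the helper name `fit_stump` are assumptions made for illustration.

```python
import math
import random

def black_box(income, debt):
    """Hypothetical complex model (stand-in for, say, a neural network)."""
    return 1 if math.tanh((income - 50) / 10) - 0.02 * debt > 0 else 0

def fit_stump(rows, targets):
    """Find the single rule 'feature >= threshold' that best mimics the targets."""
    best = (0.0, None, None)  # (fidelity, feature index, threshold)
    for j in range(len(rows[0])):
        for threshold in sorted({r[j] for r in rows}):
            agree = sum((r[j] >= threshold) == bool(t) for r, t in zip(rows, targets))
            fidelity = agree / len(rows)
            if fidelity > best[0]:
                best = (fidelity, j, threshold)
    return best

rng = random.Random(1)
rows = [(rng.uniform(0, 100), rng.uniform(0, 20)) for _ in range(300)]
targets = [black_box(*r) for r in rows]  # the surrogate learns the model's outputs, not true labels

fidelity, feature, threshold = fit_stump(rows, targets)
names = ["income", "debt"]
print(f"surrogate rule: approve if {names[feature]} >= {threshold:.1f} (fidelity {fidelity:.2f})")
```

A surrogate is only as trustworthy as its fidelity: a simple rule that agrees with the black box on most inputs is a useful summary, while a low-fidelity surrogate explains little about the original model.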

The Benefits of Explainable AI

Adopting explainable AI helps companies create dependable systems that people can comprehend, trust, and confidently incorporate into real-world decisions. These benefits extend well beyond compliance.

1. Increased Trust

Users become more confident when they understand AI decisions, which promotes broader adoption and better cooperation between humans and intelligent systems.

2. Faster Debugging

Without requiring extensive trial-and-error debugging, explainable models enable developers to promptly identify flaws, biases, and unexpected behavior by exposing decision logic.

3. Ethical AI Development

Transparency promotes ethical design, helping ensure that AI technologies operate fairly and lowering the risk of harmful or biased automated decisions.

4. Regulatory Readiness

Explainable AI makes it easier for businesses to adhere to changing international laws by providing a clear explanation for automated judgments.

5. Better Decision-Making

Understanding AI reasoning lets people combine machine-generated insights with their own expertise, resulting in better-informed strategies and more confident conclusions.

6. Stronger Business Confidence

Stakeholder confidence is bolstered by transparent AI systems, which assist companies in implementing innovation responsibly and fostering long-term trust.

Real-World Applications of Explainable AI

Explainable AI is already in use in sectors where users and organizations depend on accountability, transparency, and reliable decision-making.

1. Healthcare

Explainable AI helps physicians understand disease predictions, so that medical decisions rest on clear insights rather than opaque algorithmic outputs.

2. Finance

Explainable AI is used by banks to make loan approvals, fraud detection, and risk assessments more transparent while upholding regulatory compliance and fairness.

3. Autonomous Vehicles

With the aid of explainable AI, engineers can better understand how self-driving systems evaluate road conditions and respond safely to unexpected obstacles.

4. Hiring and Recruitment

Explainable AI is used by organizations to make sure hiring algorithms assess applicants fairly and steer clear of covert bias in hiring decisions.

5. Insurance

Explainable AI is used by insurance firms to provide rational justifications for pricing decisions, risk assessments, and claim approvals.

6. Cybersecurity

Explainable AI helps security teams understand why a threat was flagged, so they can investigate suspicious activity more quickly and stop cyberattacks.

Challenges of Explainable AI

Explainable AI has many benefits, but putting it into practice isn't always easy. When attempting to make complicated AI systems transparent and intelligible, organizations frequently encounter a number of difficulties.

1. Complexity of Modern AI Models

Sophisticated models such as deep neural networks can have millions of parameters, which makes it hard to understand how decisions are actually made.

2. Trade-off Between Accuracy and Interpretability

Highly interpretable models may sacrifice predictive accuracy, while more advanced models often perform better but are harder to understand.

3. Lack of Standardized Methods

Because there is no common framework for explainability, businesses must combine a variety of tools and methods across their AI systems.

4. Misinterpretation of Explanations

Users may misinterpret AI explanations and draw false inferences about how the model actually operates.

5. Computational Overhead

Generating explanations for complex models can require additional computing power, which lengthens processing times and raises operating costs.

6. Balancing Transparency and Data Privacy

Detailed explanations could inadvertently reveal private information or proprietary algorithms, raising security and privacy concerns.

The Future of Explainable AI

Explainability will become even more important as artificial intelligence develops. Businesses increasingly recognize that building powerful AI systems is not enough; these systems also need to be transparent and reliable.

Future developments in Explainable AI may include:

- More intuitive visualization tools
- Better methods for explaining deep learning models
- Integration of explainability into AI development frameworks
- Stronger regulations requiring AI transparency

Ultimately, the goal is to create AI systems that can collaborate effectively with humans, rather than operating as mysterious black boxes.

As AI continues to influence our lives and workplaces, understanding not only its results but also the logic behind them is becoming increasingly important. Explainable AI (XAI) fills this gap by making AI systems more transparent, reliable, and equitable. For people who want to expand their knowledge and acquire practical skills, exploring courses in artificial intelligence can be a good next step toward working with AI confidently and responsibly.

AI is transforming our lives and workplaces, but understanding its decisions is just as important as the outcomes. Explainable AI (XAI) makes AI systems more transparent and trustworthy by revealing how and why decisions are made. By using it, businesses and individuals can make better-informed, fairer decisions while reducing errors and hidden bias. Becoming proficient with these tools builds confidence and turns AI from a mystery into a useful partner.
