Cost Functions in Machine Learning: Your Easy Guide

Learn how cost functions in machine learning guide model optimization, minimize prediction errors, and drive accurate, successful AI systems across industries.

Many machine learning students ignore an important concept that determines whether a model is successful or unsuccessful. An algorithm starts making predictions when you feed it data, such as predicting what customers might purchase, suggesting movies, spotting fraud, or identifying faces. A silent manual known as cost functions in machine learning is the driving force behind all of this advancement.

A model can better grasp how inaccurate its predictions are and how to make improvements by using cost functions. It's crucial to comprehend machine learning in cost functions if you're studying AI or want to work in data science. The ability of a model to measure and minimize errors is often what distinguishes a powerful model from an average one.

What is a Cost Function in Machine Learning?

In machine learning, a cost function can be used to quantify the discrepancy between a model's predictions and the actual values in a dataset. It helps the model in comprehending its level of performance. The cost decreases as the expected and actual values are closer to each other.

A cost function, to put it simply, responds to a fundamental question: how different are the actual outcomes from the predictions? This information is used by machine learning models to enhance their predictions. In order to reduce costs and improve forecast accuracy, the model continuously modifies its parameters during training.

Example

Consider a model that predicts home values. Let's say the model predicts ₹28,00,000, but the real cost of a house is ₹30,00,000. The difference between these two values is measured by the cost function. In order to minimize this discrepancy and produce more accurate forecasts in the future, the model then modifies its parameters.

Why Cost Functions Are Important in Machine Learning

By quantifying prediction mistakes, cost functions direct models and assist in their training. Recognizing how cost functions in machine learning enable models to learn from errors and provide better outcomes is another aspect of appreciating the importance of machine learning.

1. Measuring Model Performance

By assessing the discrepancy between expected and actual values in data, cost functions help in evaluating the performance of a machine learning model.

2. Guiding Model Training

Cost functions help the model learn by pointing out areas where errors occur during training, allowing it to gradually change its parameters.

3. Enabling Optimization

Cost functions enable optimization algorithms to slowly change variables in the model, assisting the model in improving accuracy and lowering prediction errors.

4. Supporting Model Comparison

Comparing many machine learning models and selecting the best one based on lower error is made simpler by cost values.

Types of Cost Functions in Machine Learning

Different approaches to measuring errors are needed for different kinds of machine learning issues. There are many cost functions in machine learning because of this. Selecting the appropriate cost function guarantees that the model learns effectively and produces precise forecasts. Cost functions can be broadly classified into two categories: regression and classification.

1. Regression Cost Functions

Continuous values, such as temperature, stock prices, and home prices, are predicted by regression models. Regression cost functions quantify the discrepancy between real and predicted numerical values. Typical varieties include:

-

Mean Squared Error (MSE): Determines the mean of the squared deviations between the expected and actual data. severely penalizes major mistakes.

-

Mean Absolute Error (MAE): Determines the average absolute difference between the values that were expected and those that were observed. MSE is more sensitive to outliers.

-

Huber Loss: Handles anomalies while maintaining smooth optimization by combining MSE and MAE.

The model's accuracy for continuous outputs is increased and prediction errors are reduced due to these cost functions.

2. Classification Cost Functions

Classification models predict classes or categories, including spam emails, the existence of diseases, or image labeling. These cost functions concentrate on the accuracy of the model's class prediction. Common varieties consist of:

-

Binary Cross Entropy: Used to measure the discrepancy between actual labels and anticipated probability in two-class issues.

-

Categorical Cross Entropy: Assesses how well projected probability match actual class labels in multi-class issues.

-

Hinge Loss: Predictions that fall inside or outside of the decision margin are often penalized in Support Vector Machines (SVMs).

Models are guaranteed to assign higher probabilities to accurate classifications and lower probabilities to erroneous ones due to classification cost functions.

3. Specialized Cost Functions

Some tasks require custom or task-specific cost functions:

-

Weighted Loss Functions: Give some classes or mistakes more weight; this is helpful for datasets that are unbalanced.

-

Custom Loss Functions in Deep Learning: Created for applications such as reinforcement learning, style transfer, and object detection.

These specialized methods show how cost functions in machine learning direct the model to produce precise predictions in real-world situations, enabling machine learning models to effectively tackle complicated jobs.

How Cost Functions Work in Machine Learning Models

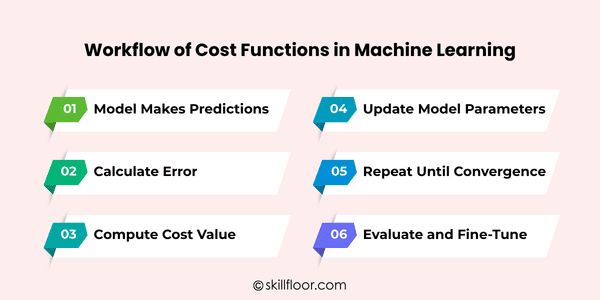

A machine learning model's learning process is centered on reducing the cost function. As a result, the model's forecasts gradually get better. Since it shows how models learn from mistakes to make better decisions, an understanding of machine learning in cost functions is crucial. Here is a condensed, step-by-step process:

Step 1: Model Makes Predictions

The model begins by predicting outputs for the provided input data using its current parameters. These initial predictions are usually not very accurate.

Since the model needs outcomes to compare with real values, the initial step in training is making predictions. The cost function cannot accurately measure errors in the absence of predictions.

Step 2: Calculate Error

The cost function then computes the discrepancy between the expected and actual values. This lets the model know how inaccurate its predictions are.

The model receives a clear indication from the error measurement regarding which predictions are accurate and which want improvement. It is necessary for education.

Step 3: Compute Cost Value

A single cost value is determined by combining the various faults. This figure shows the model's total dataset performance.

A low-cost value implies the model is performing well, while a high-cost value indicates poor predictions. The objective is always to reduce this expense.

Step 4: Update Model Parameters

The model's parameters are changed by optimization procedures, such as gradient descent, to lower the cost. The model is able to learn from its mistakes due to these minor modifications.

The model's predictions get better over time by adjusting parameters. The model approaches the ideal answer with the aid of numerous iterations and repeated updates.

Step 5: Repeat Until Convergence

During training, the prediction, error calculation, cost computation, and parameter updating processes are repeated several times. This keeps happening until the price hits its lowest point.

The model can gradually get better due to this repetition. With each iteration, the cost drops and the predictions are more accurate, indicating that the model is learning efficiently.

Step 6: Evaluate and Fine-Tune

The model is evaluated on fresh data to make sure it functions properly and generalizes outside the training set after training is finished and the expense is low.

Adjusting hyperparameters, experimenting with various cost functions, or changing the model architecture are some examples of fine-tuning. This stage guarantees optimal performance for the machine learning model.

Cost Function vs Loss Function

Although they are similar, the phrases "loss function" and "cost function" are not exactly the same in machine learning. You can train models more successfully if you understand the difference.

1. Loss Function

-

The error for a single data point is measured using a loss function.

-

For a single example, it indicates how inaccurate your model's forecast is in comparison to the actual value.

-

For instance, the loss function determines the error for a single prediction if your model predicts a house price of $300,000 while the actual price is $320,000.

-

Consider it an error measurement at the micro level.

2. Cost Function

-

The average of the loss function for every data point in your dataset is called a cost function.

-

It provides a summary of your model's overall performance throughout the whole dataset.

-

For instance, the cost function determines the average inaccuracy from all estimates if your dataset has 1,000 home prices.

-

Consider it a macro-level error metric that directs model training.

Key Difference

-

Loss function: Error for one sample

-

Cost function: Average error for the whole dataset

The loss function is computed for each prediction in order to construct the cost function, which is used in practice by machine learning algorithms to direct optimization and parameter changes.

Choosing the Right Cost Function in Machine Learning

Because it directly impacts how well a machine learning model learns from data, choosing the appropriate cost function is essential. Making the right decision guarantees more consistent training, improved predictions, and quicker learning. The following six factors will help you select the appropriate cost function:

1. Type of Problem

The first factor is the kind of problem you are solving.

-

Regression problems (predicting numbers like house prices) usually use Mean Squared Error (MSE) or Mean Absolute Error (MAE).

-

Classification problems (predicting categories) often use cross-entropy or hinge loss.

2. Sensitivity to Outliers

Some cost functions are more sensitive to outliers than others.

-

Large mistakes, which could be impacted by outliers, are severely penalized by MSE.

-

MAE is a superior option for noisy datasets since it is more resilient to outliers.

3. Model Type

Different models work better with different cost functions.

-

MSE is frequently used for regression in both linear regression and neural networks.

-

For classification, Support Vector Machines (SVMs) frequently employ hinge loss.

-

For multi-class predictions, cross-entropy is frequently used by deep learning models.

4. Data Distribution

The distribution of your data can influence which cost function to choose.

-

MSE performs effectively when mistakes are normally distributed.

-

MAE or Huber loss may be more suitable for steady training when dealing with skewed or irregular data.

5. Optimization Stability

Some cost functions create smoother gradients for optimization, while others may cause instability.

-

MSE offers gradients that are smooth, which facilitates optimization.

-

Convergence may be slowed down by MAE's ability to produce sudden gradient shifts.

6. Task-Specific Requirements

Sometimes, real-world tasks require specialized cost functions.

-

When certain classes are more crucial, weighted loss functions come in handy.

-

In deep learning, custom loss functions enable models to concentrate on particular object recognition or time-series forecasting tasks.

Real World Applications of Cost Functions

In practical machine learning, cost functions are essential because they enable models to lower mistakes, enhance predictions, and produce outcomes in a variety of industries. Here are a few typical uses:

1. Recommendation Systems

Cost functions improve the accuracy of movie, product, and music recommendations by assisting algorithms in predicting user preferences.

2. Fraud Detection

Cost functions are used by models to detect fraudulent transactions, which lowers errors and strengthens financial security.

3. Medical Diagnosis

Cost functions assist physicians make better treatment options by guiding algorithms to accurately forecast diseases.

4. Autonomous Vehicles

Cost functions are used by self-driving cars to maximize predictions for obstacle detection and safe driving.

5. Stock Market Predictions

Using cost functions, models reduce stock price prediction mistakes to improve investment choices.

6. Natural Language Processing (NLP)

Cost functions aid NLP models in minimizing errors in sentiment analysis, chatbots, and translation jobs.

Challenges with Cost Functions in Machine Learning

Cost functions provide a number of difficulties even though they are crucial for training machine learning models. Designing better models and enhancing performance are made easier with an understanding of these difficulties. These are the primary problems:

1. Vanishing Gradients

In deep networks, gradients can get incredibly tiny, which hinders efficient parameter updates and slows learning.

2. Exploding Gradients

The model may diverge rather than learn successfully because too unstable updates caused by extremely large gradients.

3. Local Minima

Models may be trapped in local minima by non-convex cost functions, resulting in less-than-ideal performance.

4. Overfitting

Overfitting can result from overoptimizing training data costs, which lowers accuracy on unknown data.

5. Choosing the Wrong Cost Function

Inappropriate cost functions can impede learning and result in subpar model predictions.

6. Computational Complexity

Certain cost functions require more resources and take longer to train because they are computationally demanding.

Best Practices for Using Cost Functions

Building accurate models requires the proper use of cost functions. Smooth training, quicker convergence, and more accurate forecasts are ensured by an understanding of machine learning in cost functions.

1. Choose the Right Cost Function

Choose a cost function that fits the nature of your issue. Accurate learning and error minimization are ensured by comprehending machine learning in cost functions.

2. Normalize or Scale Data

To prevent big values from taking over, normalize features before training. In order to achieve stable learning, this helps machine learning in cost function optimization.

3. Monitor Training Loss

During training, monitor cost function values to identify problems early. Effective machine learning in cost function methods is demonstrated through monitoring.

4. Experiment with Different Cost Functions

Experiment with various cost functions and carefully modify hyperparameters. For optimal performance, this stage demonstrates practical machine learning in cost function knowledge.

5. Use Regularization if Needed

To avoid overfitting and enhance generalization, include regularization parameters. This facilitates consistent model learning across various datasets.

6. Fine-Tune Hyperparameters

To successfully maximize convergence, stability, and overall model prediction accuracy, adjust learning rate, batch size, and other parameters.

Future of Cost Functions in Machine Learning

Cost functions in machine learning have a bright future that will lead to more intelligent models, quicker learning, and more precise forecasts in a variety of global industries.

-

Models will be able to properly and effectively adjust to distinct datasets thanks to custom cost functions.

-

Without human interaction, automated optimization will choose the optimal cost function for every given situation.

-

For more accurate text, audio, and image predictions, deep learning will make use of sophisticated cost functions.

-

During deployment, adaptive cost functions will assist real-time systems in making constant adjustments and improvements.

Understanding cost functions will be crucial for developing strong, dependable, and creative AI solutions as machine learning develops.

Cost functions are a subtle but effective tool for model learning and development. They direct changes and gradually improve models by highlighting the precise areas where forecasts fall short. Developers can select the best strategy, guaranteeing quicker learning and more precise outcomes, by having a thorough understanding of machine learning in cost functions. Cost functions in machine learning assist models in reducing errors and making trustworthy conclusions in a variety of tasks, from fraud detection to home price prediction. Models remain effective when the proper methods are used, and performance is closely monitored. In the end, anyone may create smarter, more efficient AI systems by learning Cost Functions in Machine Learning.